You’ve probably seen the ads. A friendly-looking purple bubble asks how you’re feeling, or a minimalist app promises to "cure" your stress with three minutes of algorithmic breathing. It’s easy to be cynical. For years, the intersection of artificial intelligence and mental health felt like a glorified choose-your-own-adventure book. It was scripted, clunky, and honestly, a bit insulting when you were in the middle of a real crisis.

But something shifted recently. We’ve moved past the era of "I don't understand that, would you like to see a breathing exercise?" Today, AI is being used to support positive mental health in ways that actually feel human. It isn’t replacing therapists—thankfully—but it’s filling the massive, terrifying gaps in a healthcare system that’s currently failing millions of people.

If you've ever waited six months for an intake appointment, you know exactly what I mean.

The end of the 2 a.m. crisis void

The biggest win for AI in this space isn't complexity. It's availability. Mental health struggles don't keep office hours. Panic attacks don't wait for a 10 a.m. Tuesday slot.

When you’re spiraling at 2 a.m., a human therapist is asleep. Your friends might be too. This is where large language models (LLMs) changed the game. Unlike the old-school bots, modern AI can follow the thread of a conversation and respond to subtext instead of matching keywords. If you tell a modern AI tool you're feeling "burnt out," it doesn't just define the word. It asks about your specific pressure points.

Apps like Woebot and Wysa use Cognitive Behavioral Therapy (CBT) frameworks to help users reframe negative thoughts in real time. They aren't just "chatting." They’re performing a digital version of "thought catching": noticing a distorted thought, naming it, and testing it against the evidence. This helps stop a bad night from turning into a bad week. It’s about bridge-building. The AI holds your hand until you can get to a human professional.

Detecting the invisible signals

Sometimes, we’re the last people to realize our mental health is tanking. We ignore the fatigue. We chalk up the irritability to a bad day. An algorithm looking at the raw data doesn't share those particular blind spots.

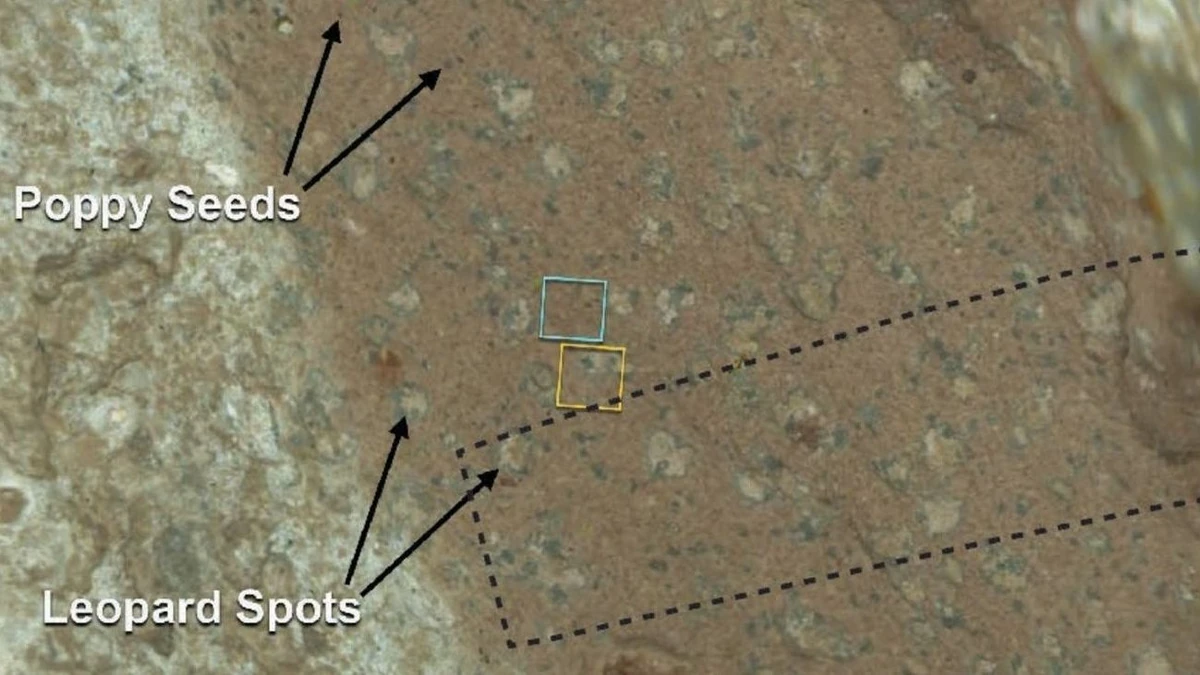

Researchers at institutions like Harvard and MIT have been working on "digital phenotyping." This is a fancy way of saying that the way you use your phone can offer clues about your mood. It’s not about reading your texts (that’s a privacy nightmare) but about patterns.

How fast are you typing? Are you scrolling through social media at 3 a.m. when you usually sleep? Is your GPS showing that you haven't left the house in four days?

Algorithms can spot these deviations from your usual baseline, sometimes before you notice them yourself. For people living with bipolar disorder, this could be a lifesaver. An AI may be able to detect the subtle shift in speech patterns or activity levels that signals an upcoming manic or depressive episode. It can then alert the user or their care team. It turns mental healthcare from reactive (fixing things once they break) to proactive.
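If you're curious what "spotting a deviation" might look like under the hood, here's a minimal sketch. It is not how any particular research group or app does it. It just compares each day's number (say, hours of phone use after midnight) against your own trailing two-week baseline and flags days that sit far outside it. The function name `flag_deviations`, the 14-day window, and the threshold of 2 standard deviations are all assumptions picked for illustration, not clinical cutoffs.

```python
from statistics import mean, stdev


def flag_deviations(daily_values, baseline_days=14, threshold=2.0):
    """Return the indices of days that deviate sharply from the trailing baseline.

    daily_values: one number per day, e.g. hours of phone use after midnight.
    baseline_days and threshold are illustrative assumptions, not clinical cutoffs.
    """
    flagged = []
    for i in range(baseline_days, len(daily_values)):
        # Baseline = the person's own previous `baseline_days` days.
        window = daily_values[i - baseline_days:i]
        mu = mean(window)
        sigma = stdev(window)
        if sigma == 0:
            # Perfectly flat baseline: any change at all counts as a deviation.
            if daily_values[i] != mu:
                flagged.append(i)
            continue
        z = (daily_values[i] - mu) / sigma
        if abs(z) >= threshold:
            flagged.append(i)
    return flagged
```

The design choice worth noticing is that the baseline is yours, not a population average. Three hours of late-night scrolling means something very different for a night-shift worker than for someone who is usually asleep by eleven.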

Why your therapist might start using an AI assistant

There’s a massive misconception that AI is trying to kick therapists out of their chairs. It’s the opposite. AI is actually being used to make therapists better at their jobs.

Think about the sheer amount of grunt work a psychologist does. They have to take notes, track themes across months of sessions, and stay up to date on every new piece of clinical research. It’s exhausting.

New tools like Lyssn are now being used to analyze therapy sessions (with consent). The AI reviews the transcript and identifies whether the therapist is actually using evidence-based techniques like Motivational Interviewing. It’s a quality control tool. It helps clinicians see their own patterns and improve.

Then there’s the "paperwork problem." Many therapists spend 20% of their week on clinical notes. AI can now summarize a session in seconds, allowing the human to spend more time actually looking at the patient instead of a screen. Better-rested, less-stressed therapists lead to better outcomes for everyone.

VR and the death of the "scary" doctor's office

Positive mental health isn't just about talking. Sometimes it’s about doing.

Virtual Reality (VR) powered by AI is emerging as one of the more promising tools for treating PTSD and phobias. Traditional exposure therapy often asks a patient to imagine their trauma, or to face the feared situation in real life. That’s hard. It’s imprecise.

With AI-driven VR, a veteran can revisit a specific environment in a controlled, safe way. The AI adjusts the intensity of the simulation based on the patient's heart rate and skin conductance in real time. If the person gets too distressed, the AI dials it back. If they’re too comfortable, it pushes them just enough to facilitate healing. This kind of moment-to-moment precision was hard to come by until recently.
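The "dial it back, push a little" logic is essentially a feedback loop. Here's a rough sketch of one step of such a loop, assuming a single signal (heart rate relative to resting) and a simulation intensity between 0.0 and 1.0. Real systems would combine more signals, including the skin conductance mentioned above, and keep a clinician in control. The function `next_intensity` and every number in it are hypothetical.

```python
def next_intensity(current, heart_rate, resting_hr, low=1.10, high=1.35, step=0.1):
    """Choose the next simulation intensity (0.0 to 1.0) from one heart-rate reading.

    low/high are assumed bounds on heart_rate / resting_hr that define a
    "working zone"; they are illustrative, not clinical values.
    """
    ratio = heart_rate / resting_hr
    if ratio > high:
        current -= step  # too distressed: dial the scene back
    elif ratio < low:
        current += step  # too comfortable: push a little harder
    # Otherwise the person is in the working zone, so hold steady.
    return round(min(1.0, max(0.0, current)), 2)
```

For example, with a resting heart rate of 70, a reading of 100 would lower an intensity of 0.5 to 0.4, while a reading of 85 would leave it where it is.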

The messy ethics we can’t ignore

I’m not going to sit here and tell you it’s all sunshine and rainbows. There are deep, dark risks to letting an algorithm into your psyche.

The biggest issue is data privacy. Your mental health data is the most intimate information you own. If an app gets hacked, or if its makers sell your "mood data" to advertisers, the consequences can be devastating. We’ve already seen cases where "support" bots gave questionable advice because they were trained on biased data sets.

We also have to talk about the "empathy gap." An AI can simulate empathy. It can say, "I'm sorry you're feeling that way." But it doesn't feel anything. For some people, knowing there’s no soul on the other side of the screen makes the interaction feel hollow. It can even increase feelings of isolation if the user starts preferring the bot over real human connection.

AI as a tool for neurodiversity

One of the most overlooked ways AI supports positive mental health is through executive function assistance. For people with ADHD or autism, the "mental load" of daily life is a constant source of anxiety.

AI tools that can summarize long emails, break down massive projects into tiny steps, or remind you to drink water are mental health tools. They reduce the friction of existing in a world that wasn't built for neurodivergent brains. When you take away the shame of "forgetting" or being "disorganized," the underlying anxiety often eases.

How to actually use this stuff

If you’re looking to integrate AI into your own mental health toolkit, don't just download the first app you see in the App Store. Be picky.

First, check the privacy policy. If it doesn't explicitly state that your data is encrypted and not for sale, delete the app. Second, look for apps that are "clinically validated." This means they’ve actually been through a study and weren't just built by three guys in a garage.

Start small. Use an AI journal like Reflection.app or a CBT bot like Wysa to track your moods for a week. See if the patterns it identifies actually resonate with you. Use it as a supplement, not a replacement.
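If you'd rather see what "patterns" means before trusting an app to find them, you can sketch the idea yourself. The snippet below is a toy, not how Reflection.app or Wysa work internally. It assumes you log one mood score a day (1 for awful, 5 for great) plus a few tags, then averages your mood per tag. The function `mood_by_tag` and the sample week are made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def mood_by_tag(entries):
    """Average mood score (1 = awful, 5 = great) for each tag in the log."""
    scores = defaultdict(list)
    for entry in entries:
        for tag in entry["tags"]:
            scores[tag].append(entry["mood"])
    return {tag: round(mean(values), 2) for tag, values in scores.items()}


# A made-up week of entries, purely for illustration.
week = [
    {"day": "Mon", "mood": 2, "tags": ["poor sleep", "deadline"]},
    {"day": "Tue", "mood": 4, "tags": ["exercise"]},
    {"day": "Wed", "mood": 2, "tags": ["poor sleep"]},
    {"day": "Thu", "mood": 3, "tags": ["deadline"]},
    {"day": "Fri", "mood": 5, "tags": ["exercise", "friends"]},
]

for tag, average in sorted(mood_by_tag(week).items(), key=lambda item: item[1]):
    print(f"{tag}: {average}")
```

A week is far too little data to prove anything, but it's enough to see whether a pattern matches your gut feeling, which is exactly the test to apply to whatever an app tells you.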

The goal isn't to have a robot best friend. The goal is to use these tools to understand your own mind well enough that you don't need the tools quite as much. AI is the training wheels, not the bike.

Go into your settings today and look at your screen time or health data. That's the raw material for your own digital phenotype. If you see your sleep patterns or social usage shifting, don't wait for an app to tell you something is wrong. Take that data to a real human and start the conversation there.