The Department of Defense (DOD) designation of Anthropic as a supply chain risk, coinciding with reports of Claude being used inside Iran, exposes a fundamental disconnect between AI safety research and the realities of global dual-use technology distribution. This friction is not merely a failure of "Know Your Customer" (KYC) protocols; it is a structural byproduct of an API-based distribution model that prioritizes low-friction scalability over national security provenance.

The current crisis underscores a three-part failure in the "Compute-Model-Access" stack. First, the hardware dependency on globalized semiconductor supply chains creates a physical vulnerability. Second, the weights and parameters of the models themselves are treated as intellectual property rather than strategic assets. Third, the access layer (the API) cannot reliably enforce geographic or jurisdictional boundaries against determined state actors using sophisticated obfuscation techniques.

The Triad of AI Supply Chain Vulnerability

To quantify the risk associated with a Tier-1 AI developer like Anthropic, we must analyze the supply chain through three distinct vectors: upstream dependencies, internal development integrity, and downstream leakage.

Upstream Hardware and Data Provenance

The DOD's classification hinges on the concentration of compute power. When a company relies on a narrow set of GPU clusters (primarily NVIDIA H100s or B200s), the physical security of those data centers and the provenance of the capital used to lease them become points of failure. If the capital stack includes international investment from entities with ties to adversarial nations, the "cleanliness" of the model is compromised before a single line of code is written.

Internal Logic and Alignment Integrity

The "Constitutional AI" approach pioneered by Anthropic is designed to bake safety into the model’s core logic. However, from a defense perspective, this creates a "black box" risk. If the alignment parameters can be bypassed or "jailbroken" by foreign intelligence services, the model shifts from a productivity tool to a high-capacity engine for disinformation or cyber-warfare. The DOD views this as a supply chain risk because the "product" (the model) contains latent capabilities that the manufacturer cannot fully disable once deployed.

Downstream Access Leakage

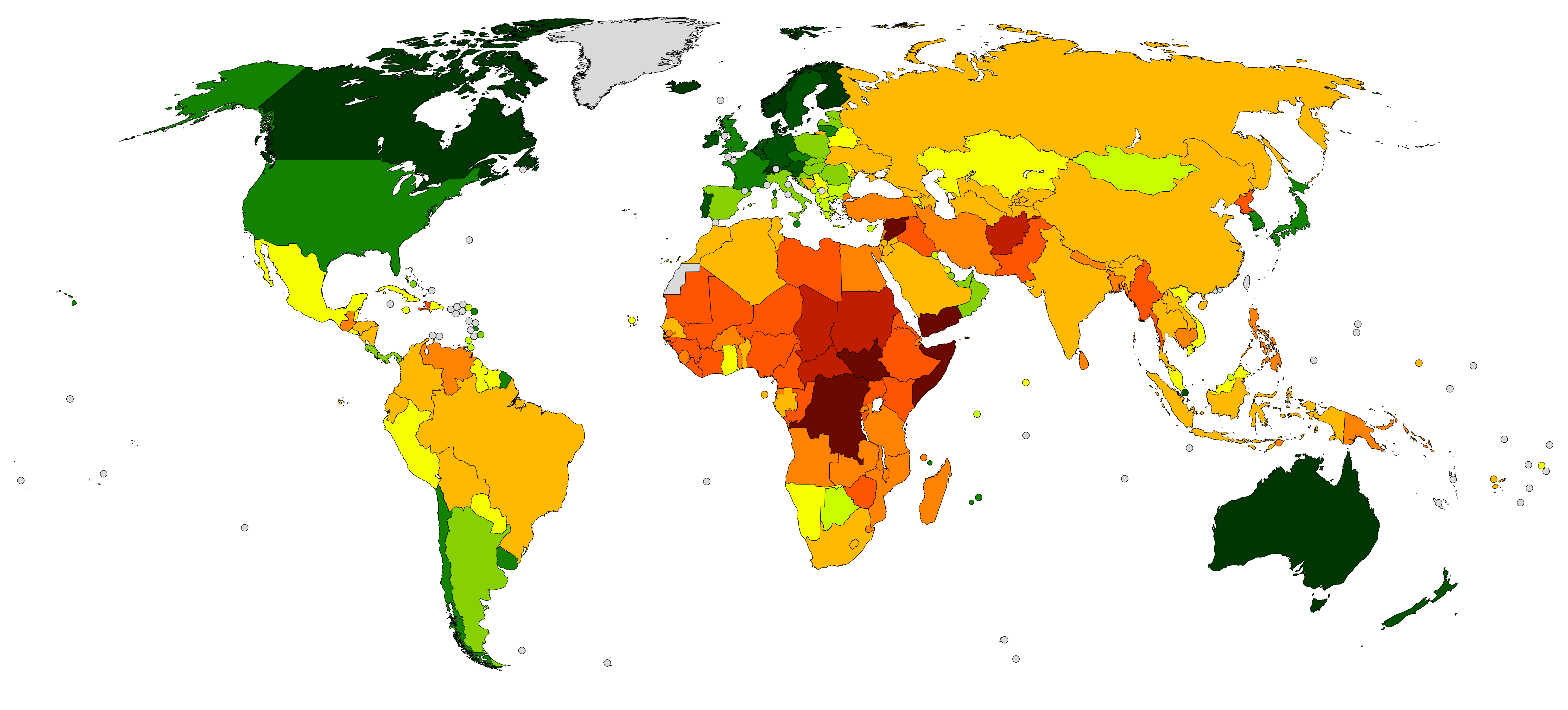

The report of Claude being used in Iran illustrates the "Last Mile" problem. While Anthropic may comply with OFAC sanctions and implement geofencing, these measures are easily circumvented via:

- Residential Proxy Networks: Masking traffic through legitimate consumer IP addresses within the US or EU.

- Third-Party Integrations: Accessing Claude through shell companies or "gray market" API aggregators that do not enforce the same level of scrutiny as the primary provider.

- Model Distillation: Using the API to generate high-quality synthetic data to train smaller, localized models within Iran, effectively "stealing" the intelligence of the model without maintaining a persistent connection.

The Economic Incentive of Proliferation vs. National Security

The primary driver of this supply chain risk is the venture-backed mandate for hyper-growth. AI labs operate under a burn rate that necessitates rapid market capture. This creates a perverse incentive to minimize friction in the onboarding process.

The Friction-Security Tradeoff

Every additional layer of identity verification (for example, Identity-as-a-Service, or IDaaS) added to an API endpoint increases the "time-to-value" for legitimate developers. In a competitive market where OpenAI, Google, and Meta are vying for dominance, Anthropic faces a prisoner's dilemma: implement rigorous, defense-grade KYC and lose market share to less restrictive competitors, or maintain a "low-gate" entry and risk the exact DOD designation it has now received.

The DOD’s intervention signals a shift in the regulatory environment: AI is no longer treated as a software-as-a-service (SaaS) product, but as a dual-use commodity similar to high-grade carbon fiber or centrifuge components for nuclear enrichment.

Quantifying the Iran Breach: A Case Study in Asymmetric Utility

The presence of Claude in Iran is not an accidental login by a student; it represents the acquisition of high-level reasoning capabilities by a sanctioned state. The utility of an LLM for an adversarial actor can be framed with an illustrative Asymmetric Knowledge Gain (AKG) ratio:

$$\mathrm{AKG} = \frac{\text{OutputComplexity}}{\text{InputEffort}} \times \text{ResourceAccess}$$

For a nation under heavy sanctions, the ability to generate sophisticated code for industrial control systems or to translate complex technical documents into Farsi provides a massive leap in capability for minimal cost. The "supply chain risk" is that Anthropic’s infrastructure is inadvertently subsidizing the R&D of the Iranian state.
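As a rough worked example of the AKG ratio, the sketch below plugs in purely illustrative numbers. The units (expert-hours for complexity and effort, a 0-to-1 fraction for `resource_access`) and every value passed in are assumptions made for illustration; the article does not specify how the three terms should be measured.

```python
def asymmetric_knowledge_gain(output_complexity: float,
                              input_effort: float,
                              resource_access: float) -> float:
    """AKG = (OutputComplexity / InputEffort) * ResourceAccess."""
    if input_effort <= 0:
        raise ValueError("input_effort must be positive")
    return (output_complexity / input_effort) * resource_access


# Hypothetical scenario: the model produces work worth 40 expert-hours
# from half an hour of prompting, and the actor can reach the model
# about half of the time it tries (resource_access = 0.5).
score = asymmetric_knowledge_gain(40.0, 0.5, 0.5)
print(score)  # 40.0
```

Under these assumed units, the ratio grows as prompting effort shrinks, which is the asymmetry the formula is meant to capture.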

The Mechanism of Shadow Access

The Iranian breach likely utilized a "Cascading API" strategy. An entity in a neutral jurisdiction (e.g., the UAE or Turkey) sets up a legitimate front company, secures an enterprise contract with Anthropic, and then builds a proprietary wrapper. This wrapper is then sold as a "localized AI solution" to Iranian entities. This creates a layer of plausible deniability for the middleman and a blind spot for the provider.
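One way a provider might look for this pattern is a fan-out heuristic: compare the number of distinct end users observed behind an account with the seat count declared at contract time. The sketch below is a minimal illustration under stated assumptions. The log format, the `end_user_hint` field, the `declared_seats` mapping, and the `tolerance` multiplier are all hypothetical, and a wrapper that strips or pools end-user hints would evade this check entirely.

```python
from collections import defaultdict


def flag_possible_resellers(request_log, declared_seats, tolerance=2.0):
    """Return account IDs whose distinct end-user count exceeds
    tolerance * the seat count declared at contract time."""
    users_per_account = defaultdict(set)
    for account_id, end_user_hint in request_log:
        users_per_account[account_id].add(end_user_hint)

    flagged = []
    for account_id, users in users_per_account.items():
        seats = declared_seats.get(account_id, 0)
        if len(users) > tolerance * seats:
            flagged.append(account_id)
    return sorted(flagged)


# Hypothetical log of (account_id, end_user_hint) pairs.
log = [
    ("acct-A", "u1"), ("acct-A", "u2"),
    ("acct-B", "u1"), ("acct-B", "u2"), ("acct-B", "u3"),
    ("acct-B", "u4"), ("acct-B", "u5"),
]
seats = {"acct-A": 2, "acct-B": 2}
print(flag_possible_resellers(log, seats))  # ['acct-B']
```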

The DOD Response: From Procurement to Exclusion

The DOD’s move to label Anthropic as a risk suggests a transition toward a "Clean Compute" standard. This standard would require:

- Hardware-Level Attestation: Proof that the model is running on specific, audited silicon.

- Continuous Monitoring: Real-time analysis of API prompts to identify patterns consistent with state-sponsored research or cyber-reconnaissance.

- Sovereign Hosting: Forcing AI labs to maintain entirely separate, air-gapped instances for government work, distinct from the commercial API used by the general public.

The cost of compliance for these measures is non-trivial. It forces a bifurcated development path: one for "Safe" commercial use and one for "Secure" government use. This division dilutes the data flywheels that these companies rely on to improve their models.

Strategic Realignment of AI Distribution

The current model of "Model-as-a-Service" is fundamentally incompatible with the requirements of the 21st-century defense industrial base. To mitigate the risks identified by the DOD, the industry must move toward a Federated Provenance Model.

1. Hardened API Gateways

This means moving beyond IP-based geofencing to hardware-based "Proof of Personhood" (strictly speaking, proof of possession of an enrolled device). It involves requiring cryptographic keys stored on physical devices (such as YubiKeys) for high-volume API access, tied to verified corporate identities.
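The core of such a gateway is a challenge-response exchange: the gateway issues a fresh nonce and the enrolled device proves it holds the registered key. The sketch below illustrates only that flow. Real hardware keys use asymmetric signatures; to stay within the Python standard library, this example substitutes an HMAC over a shared secret, which is an assumption made for illustration and not how a YubiKey actually works. All function names are hypothetical.

```python
import hashlib
import hmac
import secrets


def issue_challenge() -> bytes:
    """Gateway side: a fresh random nonce for each session."""
    return secrets.token_bytes(32)


def sign_challenge(device_secret: bytes, challenge: bytes) -> bytes:
    """Device side: stand-in for the signature a hardware key would produce."""
    return hmac.new(device_secret, challenge, hashlib.sha256).digest()


def verify_response(device_secret: bytes, challenge: bytes, response: bytes) -> bool:
    """Gateway side: recompute the tag and compare in constant time."""
    expected = hmac.new(device_secret, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


registered_secret = secrets.token_bytes(32)  # hypothetical enrolment step
challenge = issue_challenge()

genuine = sign_challenge(registered_secret, challenge)
forged = sign_challenge(b"not-the-enrolled-device", challenge)

print(verify_response(registered_secret, challenge, genuine))  # True
print(verify_response(registered_secret, challenge, forged))   # False
```

Because the nonce changes on every exchange, a captured response cannot simply be replayed from a proxy in another jurisdiction.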

2. Prompt-Level Anomaly Detection

This means developing "Security-Aware Alignment," where the model itself acts as a sensor. If a cluster of prompts from a specific region or account begins to map out the vulnerabilities of US power grids or chemical processing plants, the system must trigger an automated "kill switch" and report the telemetry to the Cybersecurity and Infrastructure Security Agency (CISA).
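A minimal sketch of the "cluster of prompts" trigger is a per-account sliding window that suspends access once enough recent prompts are flagged. Everything here is an assumption for illustration: the keyword list stands in for a real classifier, the window and threshold values are arbitrary, and the reporting step is omitted because the article does not describe a reporting interface.

```python
from collections import defaultdict, deque

# Hypothetical watch list; a production system would use a trained classifier.
SENSITIVE_TERMS = ("scada", "substation", "plc firmware")


class PromptMonitor:
    def __init__(self, window: int = 20, threshold: int = 5):
        self.threshold = threshold
        # Keep only the most recent `window` hit/miss flags per account.
        self.history = defaultdict(lambda: deque(maxlen=window))
        self.suspended = set()

    def observe(self, account_id: str, prompt: str) -> bool:
        """Record one prompt; return True if the account is suspended."""
        if account_id in self.suspended:
            return True
        hit = any(term in prompt.lower() for term in SENSITIVE_TERMS)
        self.history[account_id].append(hit)
        if sum(self.history[account_id]) >= self.threshold:
            self.suspended.add(account_id)  # the automated "kill switch"
        return account_id in self.suspended


monitor = PromptMonitor(window=10, threshold=3)
prompts = [
    "Summarise this memo",
    "List SCADA protocols",
    "Map substation layouts",
    "Explain PLC firmware update steps",
]
for text in prompts:
    status = monitor.observe("acct-X", text)
print(status)  # True
```

The window bounds how far back the count looks, so an account with occasional legitimate questions about industrial systems is less likely to be suspended than one issuing a concentrated burst.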

3. Financial Integration as Verification

This means aligning API access with the institutional banking system that settles over the SWIFT network. By only accepting payments from verified institutional banks in non-sanctioned jurisdictions, AI providers can use the banks' existing "Know Your Customer" checks as a secondary filter.
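As a sketch of how such a payment filter could work, the country of the paying bank can be read from its BIC (the identifier used on the SWIFT network), where characters five and six are the ISO country code. The blocked-country set below is illustrative only and not an authoritative sanctions list, the function names are hypothetical, and `TESTIRTHXXX` is a made-up identifier. A BIC shows where the institution is registered, not who ultimately benefits from the payment.

```python
import re

# Illustrative only; a real deployment would load a maintained sanctions list.
BLOCKED_COUNTRIES = {"IR", "KP"}

# BIC layout: 4-char institution code, 2-letter country code,
# 2-char location code, optional 3-char branch code.
BIC_PATTERN = re.compile(r"^[A-Z0-9]{4}[A-Z]{2}[A-Z0-9]{2}([A-Z0-9]{3})?$")


def bic_country(bic: str) -> str:
    """Extract the two-letter country code from a well-formed BIC."""
    normalized = bic.strip().upper()
    if not BIC_PATTERN.match(normalized):
        raise ValueError(f"not a well-formed BIC: {bic!r}")
    return normalized[4:6]


def payment_allowed(bic: str) -> bool:
    """Accept a payment only if the paying bank's country is not blocked."""
    return bic_country(bic) not in BLOCKED_COUNTRIES


print(payment_allowed("DEUTDEFF"))     # True
print(payment_allowed("TESTIRTHXXX"))  # False
```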

The Inevitability of Bounded AI

The Anthropic/DOD friction confirms that the era of borderless, universal AI access is ending. We are entering a period of "AI Mercantilism," where the strategic value of a model is guarded as fiercely as its source code. The paradox is that the more "human-centric" and "safe" a model becomes, the more useful it is for an adversary to weaponize for social engineering and strategic deception.

The strategic play for AI firms is no longer just "scaling laws" and parameter counts; it is the development of a geopolitical defense layer. This requires a shift in engineering resources from pure model performance to the creation of a "Verified Access Stack." Companies that fail to build this layer will find themselves excluded from the most lucrative and stable contracts in the world: those of the global defense and intelligence communities.

AI firms should appoint a Chief Security Officer with direct reporting lines to both the CEO and a government liaison board. This role must have the authority to throttle or terminate commercial access points that show statistical signatures of adversarial state usage, regardless of the impact on quarterly revenue targets. The long-term valuation of these companies will be tied to their status as trusted national assets, not just successful software vendors.