The departure of a lead robotics engineer from OpenAI over a controversial military partnership marks a definitive shift in the company’s trajectory. It is no longer a research lab protected by a non-profit mission. It is a defense contractor in waiting. When the head of OpenAI’s robotic brain project walked away, citing the lack of human authorization in a growing deal with the United States government, it wasn't just a personnel hiccup. It was an alarm bell for anyone who still believes that artificial intelligence can remain neutral. This fracture reveals a deeper reality: the infrastructure for autonomous machines is being handed over to the Department of Defense before either the public or the developers themselves fully understand the kill chain.

The Friction Between Silicon and Steel

For years, OpenAI maintained a public stance of caution regarding physical agency. They shuttered their initial robotics team in 2021, claiming that a lack of data hindered progress. That was a convenient narrative. The truth is that giving a Large Language Model (LLM) a body creates a set of liabilities that a software-only company isn't prepared to handle. When you move from a chatbot to a hydraulic limb, the consequences of a "hallucination" aren't just a wrong fact on a screen. They are broken bones or worse.

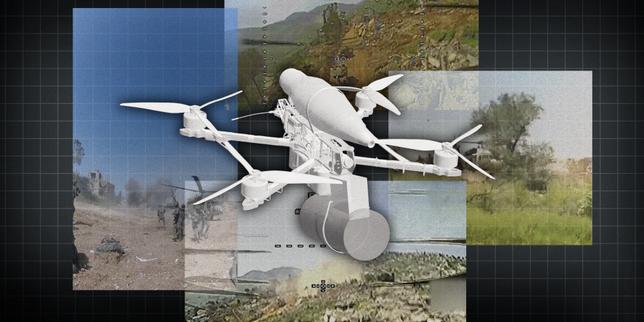

The recent internal collapse of the robotics division stems from a sudden, aggressive pivot toward military applications. The United States military wants a "general-purpose" robotic mind. They want a system that can adapt to the chaos of a battlefield without needing a programmer to hard-code every possible scenario. OpenAI, now hungry for the massive compute capital required to stay ahead of competitors, has found its most willing benefactor in the Pentagon. This isn't about search and rescue. It is about the automation of force.

The engineer who resigned didn't do so because of a technical disagreement. The exit was triggered by the removal of "meaningful human control" clauses in internal development contracts. In plain terms, the safeguards that required a human to authorize a physical action by an AI-driven machine were being diluted to meet the speed requirements of modern electronic warfare.

The Architecture of Autonomy

To understand why this is a crisis, one must look at how OpenAI’s models actually control hardware. We are moving away from traditional robotics, where $f(x)$ yields a predictable $y$. Instead, we are seeing the implementation of "End-to-End" learning.

In this setup, a neural network receives raw camera data and outputs direct motor commands. There is no middleman. There is no logic gate that says "stop if a human is in the way" unless that specific scenario was perfectly weighted during the training phase.
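The difference between the two designs can be made concrete with a toy sketch. Everything here is hypothetical and chosen for illustration only: the `Frame` type, the `human_detected` flag (assumed to come from a separate, independent sensor), and the stand-in policy are not taken from any OpenAI system.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

# Hypothetical: a policy maps raw pixel values to one torque value per joint.
Policy = Callable[[Sequence[float]], List[float]]


@dataclass
class Frame:
    pixels: Sequence[float]  # stand-in for raw camera data
    human_detected: bool     # assumed output of an independent safety sensor


def end_to_end_step(policy: Policy, frame: Frame) -> List[float]:
    """Pixels in, torques out. Nothing sits between the network and the motors."""
    return list(policy(frame.pixels))


def gated_step(policy: Policy, frame: Frame) -> List[float]:
    """Same policy, but an explicit rule outside the network can veto its output."""
    command = list(policy(frame.pixels))
    if frame.human_detected:
        # Hard-coded interlock: zero every joint regardless of what the policy wants.
        return [0.0] * len(command)
    return command


def toy_policy(pixels: Sequence[float]) -> List[float]:
    """Placeholder for a trained network; it knows nothing about humans."""
    total = sum(pixels)
    return [total, -total]


frame = Frame(pixels=[0.5, 0.5], human_detected=True)
unguarded = end_to_end_step(toy_policy, frame)  # the policy's command goes straight through
guarded = gated_step(toy_policy, frame)         # the interlock overrides the policy
```

In the first function, whether the arm stops for a person depends entirely on what the policy learned. In the second, it depends on one line of code that can be read and audited.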

$$\text{Force} = \text{Mass} \times \text{Acceleration}$$

In a warehouse, this equation sets a safety limit: cap the acceleration of a moving mass and you cap the force it can deliver on impact. On a battlefield, it is a performance metric. The military’s interest lies in the ability of these models to process information at speeds no human can match. By integrating OpenAI’s GPT-based architectures into robotic heads and limbs, the U.S. government is betting on a future where the OODA loop (Observe, Orient, Decide, Act) is entirely algorithmic.

The whistleblower’s primary concern was that the "Decide" and "Act" phases were being merged. When the software determines the intent of a target and moves a limb to intercept it in microseconds, "human authorization" becomes a theoretical concept rather than a physical reality. The human is no longer in the loop; they are barely on the loop, relegated to a supervisor who can only watch as the machine executes a sequence of events it has already completed.
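The gap between "in the loop" and "on the loop" comes down to what happens when the human is too slow. The sketch below is a simplified model under stated assumptions: the `Oversight` modes, the function name, and the timing figures are all hypothetical and not drawn from any contract or system described here.

```python
from enum import Enum


class Oversight(Enum):
    IN_THE_LOOP = "in"  # the action waits for explicit human approval
    ON_THE_LOOP = "on"  # the action proceeds unless a human vetoes it in time


def action_executes(mode: Oversight,
                    human_response_ms: float,
                    action_deadline_ms: float,
                    human_approves: bool) -> bool:
    """Return True if the machine ends up performing the action."""
    human_in_time = human_response_ms <= action_deadline_ms
    if mode is Oversight.IN_THE_LOOP:
        # No timely approval means no action.
        return human_in_time and human_approves
    # ON_THE_LOOP: only a veto that arrives before the deadline stops the action.
    timely_veto = human_in_time and not human_approves
    return not timely_veto


# Illustrative numbers only: a human who needs 250 ms and wants to refuse,
# facing a machine that commits within 5 ms.
in_loop = action_executes(Oversight.IN_THE_LOOP, 250, 5, human_approves=False)
on_loop = action_executes(Oversight.ON_THE_LOOP, 250, 5, human_approves=False)
```

With these inputs the in-the-loop design does nothing, while the on-the-loop design acts despite the human's objection, because the objection arrives after the deadline.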

The Quiet Death of the Non-Profit Charter

OpenAI’s original charter stated that its primary fiduciary duty was to humanity. That document is now effectively a relic of a simpler era. The transition to a "capped-profit" model was the first step, but the second step is far more dangerous: the reliance on dual-use technology.

In the world of international trade and defense, "dual-use" refers to technology that has both civilian and military applications. A robotic hand that can pick a strawberry can also pull a trigger. By selling the "brain" of the robot to the U.S. government, OpenAI avoids the optics of building weapons while providing the essential component that makes those weapons autonomous.

The internal dissent wasn't just about the ethics of war. It was about the betrayal of the technical roadmap. Developers were told they were building a system to help the elderly or assist in manufacturing. They woke up to find their code being audited by defense specialists. This bait-and-switch has gutted the robotics team, leaving behind those who are either comfortable with the military-industrial complex or those who are too financially locked in through unvested equity to leave.

The Data Hunger and the Defense Budget

Training a robot requires millions of hours of physical data. You cannot scrape the physical world as easily as you can scrape the internet. This creates a massive financial barrier. Microsoft’s billions cover the servers, but they don't cover the specialized hardware and real-world testing grounds needed to perfect a robotic head.

The U.S. military offers something better than money: access. They have the ranges, the hardware, and the legal immunity to test autonomous systems at scale. For OpenAI, the deal isn't just a revenue stream; it is a data pipeline. They are trading their autonomy for the ability to train their models on high-stakes, real-world data that no private company could ever replicate.

However, this data is poisoned by its intent. If a model is trained primarily on data derived from tactical simulations and combat environments, its "general" intelligence will be inherently biased toward aggression and threat detection. We are effectively teaching the first generation of autonomous physical entities that the world is a series of targets and obstacles.

Breaking the Chain of Command

The most chilling aspect of the resignation is the mention of "unauthorized" physical updates. In a standard software environment, a "push to production" update might break a website. In the context of the U.S. military deal, these updates were allegedly being applied to hardware prototypes without the safety oversight of the robotics lead.

This suggests a bypass of the traditional safety hierarchy. The engineering teams responsible for the "ethics" of the AI were being sidelined by the business development teams who needed to hit milestones for the government contract.

The Hidden Costs of the Deal

| Feature | Research Lab Priority | Defense Contractor Priority |
|---|---|---|
| Safety Latency | High (Stop and verify) | Low (Act before the enemy) |
| Explainability | Essential (Why did it move?) | Optional (Did it work?) |
| Human Authorization | Mandatory Gate | Advisory Role |
| Data Source | Open-source/Simulation | Classified/Tactical |

The table above illustrates the irreconcilable gap between the two worlds. You cannot have a "safe" robot by OpenAI’s previous standards that also meets the "lethal overmatch" requirements of the Pentagon. One has to give. The resignation of the robotics head confirms which side won.

The Myth of the Kill Switch

Silicon Valley loves the idea of a "Big Red Button." It’s a comforting thought that allows us to play with fire while believing we have an extinguisher. But in the realm of high-speed robotics, the kill switch is a fantasy.

If an autonomous system is running at the network edge, on the device itself, it is not constantly checking back with a central server for permission. It is making local decisions. If that system malfunctions, the window to "kill" the process is often measured in milliseconds. By the time a human operator realizes something is wrong, the kinetic action has already occurred.
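A back-of-the-envelope calculation shows why the timing matters. The function and every figure below are illustrative assumptions, not measurements of any real system: a limb moving at constant speed keeps moving for the operator's reaction time plus the time the stop signal takes to reach the motors.

```python
def travel_before_stop_m(speed_m_per_s: float,
                         operator_reaction_s: float,
                         stop_latency_s: float) -> float:
    """Distance covered between the fault and the moment the stop takes effect.

    Assumes constant speed and ignores braking distance, so this is a lower bound.
    """
    return speed_m_per_s * (operator_reaction_s + stop_latency_s)


# Hypothetical figures: a limb moving at 2 m/s, a 250 ms human reaction,
# and 50 ms for the stop command to propagate.
distance = travel_before_stop_m(2.0, 0.25, 0.05)
print(f"Limb travels about {distance:.2f} m before the stop takes effect")
```

Under those assumptions the limb covers roughly 0.6 m after the fault begins, which is more than enough to complete a strike that started within arm's reach.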

The engineer who left realized that OpenAI was no longer building a switch. They were building a momentum machine. The partnership with the U.S. government ensures that this machine will be deployed in environments where "safety pauses" are seen as liabilities.

The Sovereignty of the Algorithm

We are witnessing the birth of a new kind of geopolitical power. When a private company provides the cognitive layer for a nation's defense, the line between corporate policy and national security disappears. If OpenAI decides to "patch" its model to be more pacifist, does that constitute a strike against the U.S. military's readiness? Conversely, if the government demands a more aggressive posture, does OpenAI have the right to refuse?

The "lack of human authorization" mentioned in the resignation isn't just about a single project. It’s about the loss of sovereignty over the technology itself. We are moving toward a reality where the humans in charge don't actually know how the decision-making process works inside the black box, yet they are increasingly comfortable letting that box control physical limbs.

This isn't a "glitch" or a "growing pain." It is the intentional construction of a system designed to operate beyond the speed of human thought. The departure of the robotics leadership proves that the people closest to the tech are the ones most terrified by it. They see the path from "helpful assistant" to "autonomous operator," and they know that once that threshold is crossed, there is no going back.

The focus now shifts to the remaining engineers. Will they follow the exit, or will they continue to build the nervous system for a new era of mechanized conflict? The silence from OpenAI’s executive floor speaks volumes. They have made their choice. They have traded the lab coat for the combat vest, and the "human" in human authorization is now the most expendable part of the system.

Stop looking for a consensus that isn't coming. The era of "AI for good" as a universal mission ended the moment the first robotic head was synced to a defense server. The machines are getting bodies, and the people who built them are losing their voices.

Check the version history of any "safety" guidelines released by these firms over the next six months. You will find that the word "must" is slowly being replaced by "should," and the word "human" is being replaced by "operator." That linguistic shift is where the real danger lives. It is the sound of a door locking from the outside.
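That check is easy to automate. The following is a minimal sketch, assuming you have saved two versions of a guideline as plain text; the watched terms come from the paragraph above, and the function names and sample sentences are hypothetical.

```python
import re
from collections import Counter
from typing import Dict

# Terms to track, taken from the wording shift described above.
WATCHED = ("must", "should", "human", "operator")


def term_counts(text: str) -> Dict[str, int]:
    """Count exact, case-insensitive occurrences of each watched word.

    Plurals such as "humans" are not counted; extend WATCHED if you need them.
    """
    words = Counter(re.findall(r"[a-z]+", text.lower()))
    return {term: words[term] for term in WATCHED}


def term_shift(old_text: str, new_text: str) -> Dict[str, int]:
    """Positive values mean the term appears more often in the new version."""
    before, after = term_counts(old_text), term_counts(new_text)
    return {term: after[term] - before[term] for term in WATCHED}


# Made-up sentences standing in for two revisions of a policy document.
old_version = "A human must authorize every physical action."
new_version = "An operator should authorize every physical action."
shift = term_shift(old_version, new_version)
```

A raw count says nothing about context, so treat a shift as a prompt to read the changed passages, not as proof of intent.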